Find Broken Links: Crawl a website instantly and find broken links (404s) and server errors. Bulk export the errors and source URLs to fix, or send to a developer.

Audit Redirects: Find temporary and permanent redirects, identify redirect chains and loops, or upload a list of URLs to audit in a site migration.

Analyse Page Titles & Meta Data: Analyse page titles and meta descriptions during a crawl and identify those that are too long, short, missing, or duplicated across your site.

Discover Duplicate Content: Discover exact duplicate URLs with an md5 algorithmic check, partially duplicated elements such as page titles, descriptions or headings and find low content pages.

Extract Data with XPath: Collect any data from the HTML of a web page using CSS Path, XPath or regex. This might include social meta tags, additional headings, prices, SKUs or more!

Review Robots & Directives: View URLs blocked by robots.txt, meta robots or X-Robots-Tag directives such as 'noindex' or 'nofollow', as well as canonicals and rel="next" and rel="prev".
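To make the idea of an md5 check for exact duplicates more concrete, here is a minimal Python sketch. It is not the SEO Spider's own implementation, which is not described here; the function name `find_exact_duplicates` and the sample URLs are hypothetical, and the sketch assumes the page bodies have already been fetched as strings.

```python
import hashlib
from collections import defaultdict


def find_exact_duplicates(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose page bodies share the same MD5 digest.

    `pages` maps a URL to its already-downloaded body text
    (an assumption of this sketch; no crawling happens here).
    """
    urls_by_digest = defaultdict(list)
    for url, body in pages.items():
        # Identical bodies always produce identical digests.
        digest = hashlib.md5(body.encode("utf-8")).hexdigest()
        urls_by_digest[digest].append(url)
    # Keep only digests shared by more than one URL.
    return [sorted(urls) for urls in urls_by_digest.values() if len(urls) > 1]


if __name__ == "__main__":
    # Hypothetical sample data for illustration only.
    sample_pages = {
        "https://example.com/a": "<html><body>Same content</body></html>",
        "https://example.com/b": "<html><body>Same content</body></html>",
        "https://example.com/c": "<html><body>Different content</body></html>",
    }
    for group in find_exact_duplicates(sample_pages):
        print("Exact duplicates:", ", ".join(group))
```

A hash comparison like this only catches byte-for-byte identical content; partially duplicated elements such as titles or headings would need a separate comparison.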

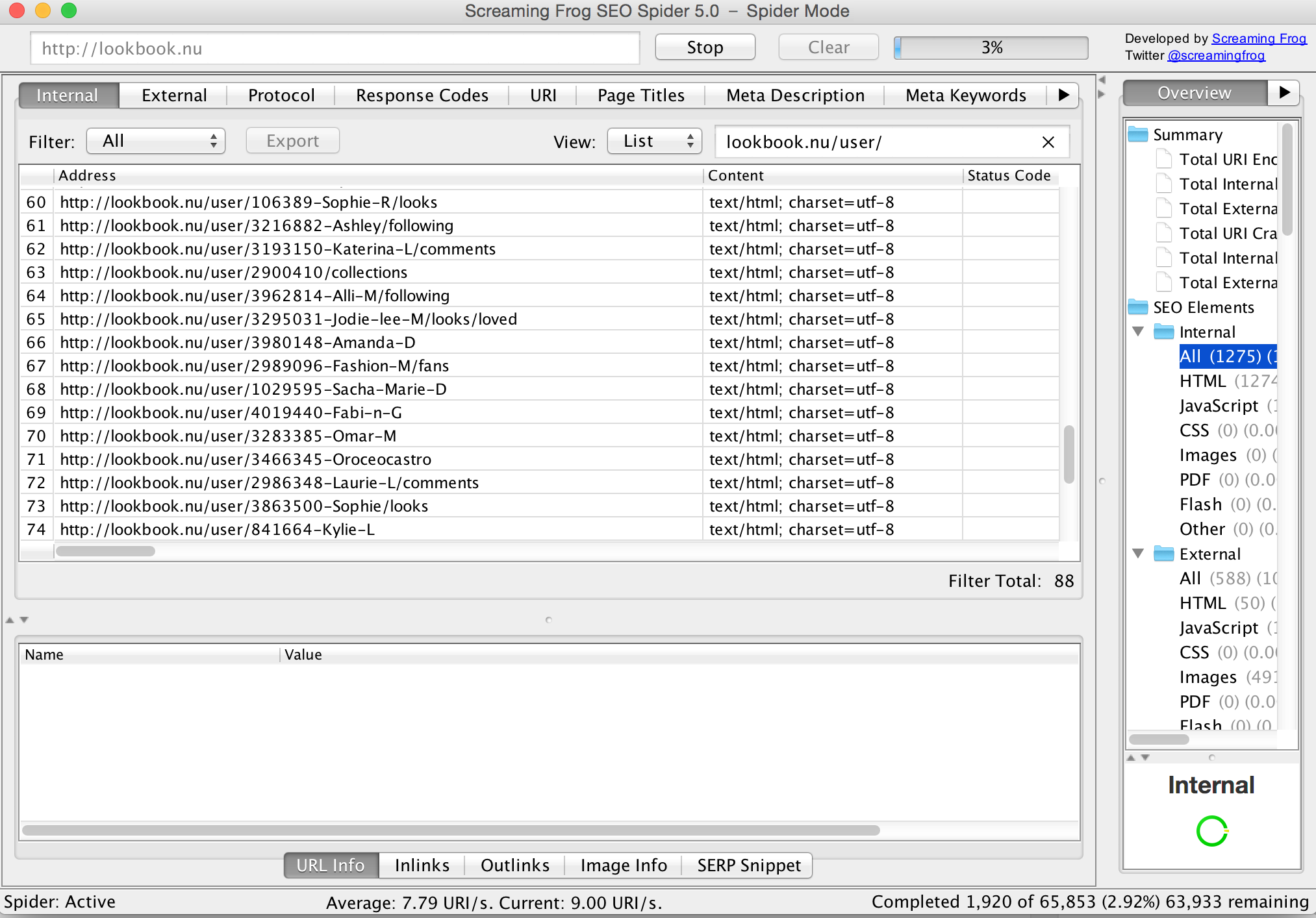

The Screaming Frog SEO Spider is a website crawler that helps you improve onsite SEO by extracting data and auditing for common SEO issues. Download and crawl 500 URLs for free, or buy a licence to remove the limit and access advanced features. The SEO Spider is a powerful and flexible site crawler, able to crawl both small and very large websites efficiently, while allowing you to analyse the results in real time. It gathers key onsite data to allow SEOs to make informed decisions.

Download Screaming Frog SEO Spider 19
